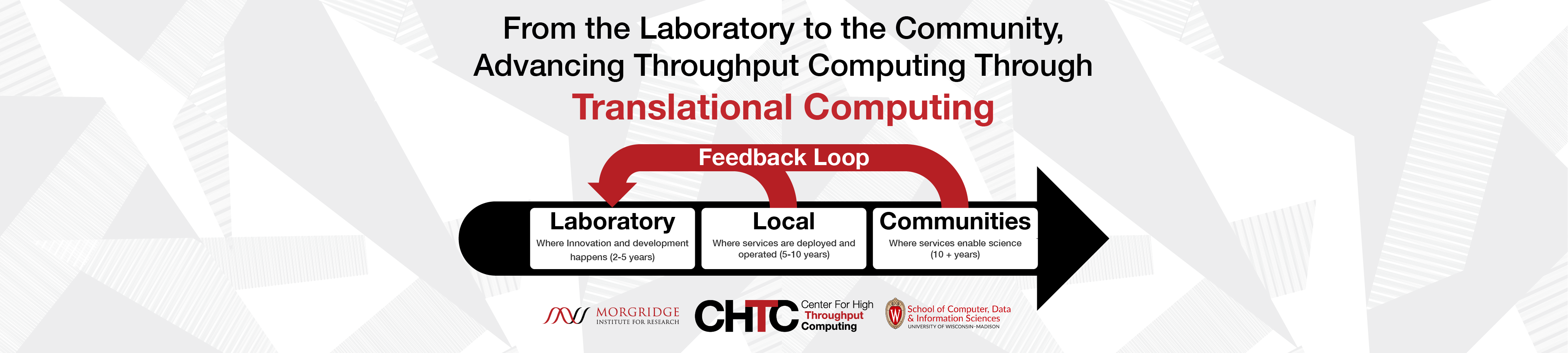

The Center for High Throughput Computing (CHTC), established in 2006, aims to bring the power of High Throughput Computing to all fields of research, and to allow the future of HTC to be shaped by insight from all fields.

Are you a UW-Madison researcher looking to expand your computing beyond your local resources? Request an account now to take advantage of the open computing services offered by the CHTC!

High Throughput Computing is a collection of principles and techniques that maximize the effective throughput of computing resources towards a given problem. When applied to scientific computing, HTC can improve the use of a computing resource, improve automation, and help drive the scientific problem forward.
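To make "effective throughput" concrete, here is a minimal toy model, not how HTCondor or any CHTC service actually schedules work. It assumes a batch of independent jobs with known run times and a pool of identical slots, and assigns each job to whichever slot frees up first. The function name `simulate_throughput` and the job numbers are hypothetical and chosen only for illustration.

```python
import heapq


def simulate_throughput(job_hours, slots):
    """Greedily place independent jobs on identical slots (toy model).

    Returns (makespan_hours, jobs_per_hour).
    """
    if slots < 1:
        raise ValueError("slots must be at least 1")
    if not job_hours:
        return 0.0, 0.0
    # Each heap entry is the time at which one slot becomes free.
    free_at = [0.0] * slots
    heapq.heapify(free_at)
    for hours in job_hours:
        start = heapq.heappop(free_at)  # earliest available slot
        heapq.heappush(free_at, start + hours)
    makespan = max(free_at)
    rate = len(job_hours) / makespan if makespan > 0 else 0.0
    return makespan, rate


if __name__ == "__main__":
    jobs = [2.0] * 100  # 100 independent 2-hour jobs (made-up numbers)
    for slots in (1, 10, 100):
        makespan, rate = simulate_throughput(jobs, slots)
        print(f"{slots:>3} slots: {makespan:6.1f} h total, {rate:5.2f} jobs/h")
```

In this sketch the total work is the same in every case. Only the number of jobs completed per hour changes as more slots become available, and that rate is the quantity HTC aims to maximize.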

The team at CHTC develops technologies and services for HTC. CHTC is the home of the HTCondor Software Suite, which has over 30 years of experience in tackling HTC problems. CHTC also manages shared computing resources for researchers on the UW-Madison campus and leads the OSG Consortium, a national-scale environment for distributed HTC.

HTCSS

The HTCondor Software Suite (HTCSS) provides sites and users with the ability to manage and execute HTC workloads. Whether it’s managing a single laptop or 250,000 cores at CERN, HTCondor helps solve computational problems by applying HTC principles.

Pelican

Pelican is a data transfer service that helps researchers access data and move it from where it is stored to where the computation runs. Inspired by the 2022 White House Office of Science and Technology Policy (OSTP) proposition to make all government-funded research data publicly accessible, Pelican works to efficiently transfer this open data to researchers who require it. Pelican provides an open-source software platform for federating dataset repositories and delivering their objects to computing capacity such as the OSPool.

Services

UW Research Computing

CHTC manages over 20,000 cores and dozens of GPUs for the UW-Madison campus; this resource, which is free and shared, aims to advance the mission of the University of Wisconsin in support of the Wisconsin Idea. Researchers can place their workloads on an access point at CHTC and utilize the resources at CHTC, across the campus, and across the nation.

Research Facilitation

CHTC’s Research Facilitation team empowers researchers to use computing to achieve their goals. The Research Facilitation approach emphasizes teaching users the skills and methodologies to manage and automate workloads on resources like those at CHTC, on the campus, or across the world.

As part of its many services to UW-Madison and beyond, the CHTC is home to or supports the following research projects and partners.

Morgridge Institute for Research

The Morgridge Institute for Research is a private biomedical research institute located on the UW-Madison campus. Morgridge’s Research Computing Theme is a unique partner of CHTC, investing in the vision of HTC and its ability to advance basic research.

PATh

The Partnership to Advance Throughput Computing (PATh) is a partnership between CHTC and OSG to advance throughput computing. Funded through a major investment from NSF, PATh helps advance HTC at a national level through support for HTCSS and provides a fabric of services for the NSF science and engineering community to access resources across the nation.

OSG

The OSG is a consortium of research collaborations, campuses, national laboratories, and software providers dedicated to advancing all of open science through the practice of distributed High Throughput Computing (dHTC) and to advancing the state of the art of dHTC itself. The OSG operates a fabric of dHTC services for the national science and engineering community, and CHTC has been a major force in OSG since its inception in 2005.